The Trillion Dollar MacGuffin

On AGI, incentives, and a lead statue of a bird

“It is difficult to get a man to understand something when his salary depends upon his not understanding it.” — Upton Sinclair

An Apparatus for Trapping Lions in the Scottish Highlands

In March 1939, Alfred Hitchcock gave a lecture at Radio City Music Hall, organized by the Museum of Modern Art and Columbia University. He was explaining suspense mechanics to a roomful of filmmakers—how to structure a melodrama, how to keep an audience’s mind occupied, how to make them suffer productively. He opened with a joke about his notes being mixed up with a letter from his mother.

Somewhere in the Q&A, a question came up about a term he’d been using in the studio: a “MacGuffin”—the mechanical element that crops up in any story,[1] the thing that the characters are motivated by, but that the audience doesn’t care about. He’d spend the next three decades explaining it with the same joke:

Two men on a train in Scotland. One asks about a package on the luggage rack. “What’s that?” “It’s a MacGuffin.” “What’s a MacGuffin?” “It’s an apparatus for trapping lions in the Scottish Highlands.” “But there are no lions in the Scottish Highlands.” “Well then, that’s no MacGuffin.”

To me, this captures the confusing nature of a MacGuffin. Every MacGuffin has a sort of vagueness—an uncertainty about just what exactly it is, and why people respond to it the way they do. Put simply, a MacGuffin is the thing that drives all the action in a story, but the details of the thing itself are irrelevant. The characters care about it desperately. The audience only need know that they want it badly.

If you pay attention to AI business reporting, you may already have noticed that this sounds a lot like Artificial General Intelligence. But first—Dashiell Hammett.

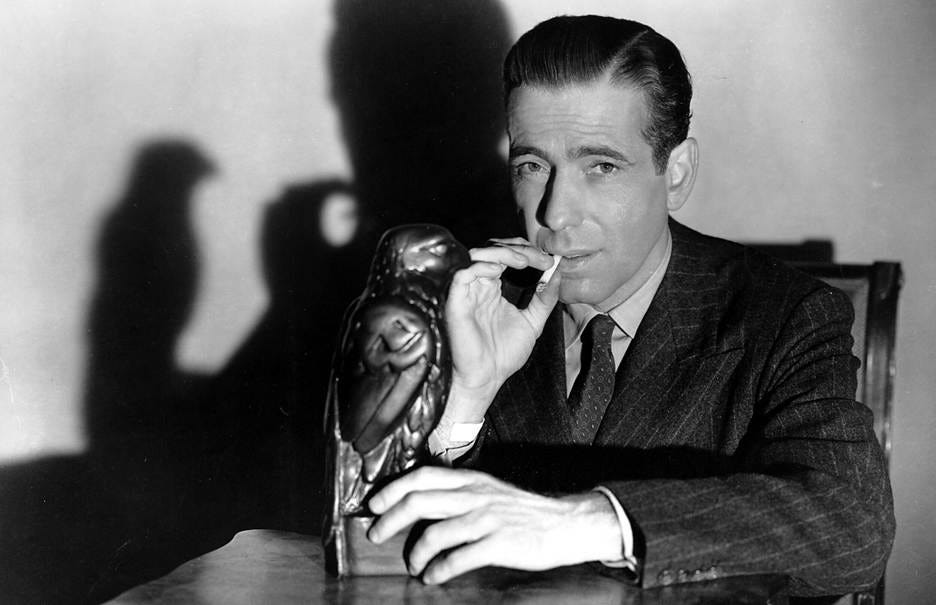

In The Maltese Falcon everybody lies, betrays, and kills to possess the titular jewel-encrusted statuette they believe is worth a fortune. Gutman has spent seventeen years chasing it. People kill and die for it. And when they finally get it, Gutman scratches the surface and finds lead.[2] It’s a fake. The entire chase—the murders, the money, the decades of obsession—was organized around something that proved to be worthless. It is the quintessential MacGuffin.

AGI could be the most valuable technology ever created. It could be lead. What I can tell you is that over a trillion dollars in combined valuations has been committed to the chase before anyone has scratched the surface.

Scoring Functions All the Way Down

A large language model optimizes for a scoring function. During training, it learns to predict the next token in a sequence. During fine-tuning, it learns to produce outputs that human raters score highly. The system has no opinion about truth, no preferences of its own. It is maximizing a score. That’s it.
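If it helps to see what "maximizing a score" looks like for next-token prediction, here is a toy sketch. Everything in it is an assumption made for illustration: the three-word vocabulary, the made-up probabilities, and the helper name `next_token_loss` are mine, not anything from a real lab's training code. Real systems compute this with neural networks over enormous corpora, but the score has the same basic shape: the model is penalized by the negative log of the probability it gave to the token that actually came next.

```python
import math

def next_token_loss(predicted_probs, actual_next_token):
    """Cross-entropy for a single prediction (hypothetical helper).

    Returns -log(p), where p is the probability the model assigned
    to the token that actually appeared next. Lower is better.
    """
    return -math.log(predicted_probs[actual_next_token])

# Made-up model outputs after the prompt "The falcon is made of".
confident_and_right = {"lead": 0.90, "gold": 0.05, "dreams": 0.05}
confident_and_wrong = {"lead": 0.05, "gold": 0.90, "dreams": 0.05}

# Suppose the text being trained on continues with "lead".
loss_right = next_token_loss(confident_and_right, "lead")
loss_wrong = next_token_loss(confident_and_wrong, "lead")

print(f"loss when right: {loss_right:.3f}")
print(f"loss when wrong: {loss_wrong:.3f}")
```

Training nudges the model toward whatever lowers that number. Nothing in the calculation asks whether "lead" is true, only whether it matches the text.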

Look at the people running the companies that build these systems. The OpenAI team is optimizing for a scoring function too—next-round valuation. Every press release, every benchmark announcement, every carefully worded blog post about “progress toward AGI” is an output generated to maximize a score: the number that investors assign to the company. The mechanism is different. The motivations are the same.

We do the same thing. Status, security, belonging, position—we just don’t publish our objective functions. When an LLM optimizes for approval, we call it sycophancy and write papers about it. When a CEO optimizes for valuation, we call it leadership. When we do it, we call it having values.

It is scoring functions all the way down—but what is being optimized, and why?

Money Makes the World Go ‘Round

In February 2026, OpenAI closed a $110 billion funding round at a $730 billion pre-money valuation.[3] That’s not a typo, and they won’t be cash-flow positive until at least 2029.[4] An analyst quoted by CNBC described the anticipated $1 trillion IPO as a “perfection scenario that assumes AGI is imminent.”[5]

Anthropic—the company behind Claude, which I use daily and which has been instrumental in writing this very piece—closed a $30 billion round at a $380 billion post-money valuation two weeks earlier.[6] The combined valuation of just the top AI labs now exceeds $1 trillion. That’s more than the GDP of Switzerland.

Let’s be clear: the technology works. The question isn’t whether AI products are valuable—they are. The question is whether a 30x revenue multiple is justified by the products themselves, or whether it requires the AGI narrative to hold. What if what we’ve built is *merely* a spectacular, world-changing, genuinely transformative technology? One that is nonetheless not on a path to the supposed riches that accompany AGI.

The AI Now Institute, in a 2025 analysis of OpenAI’s business model, put this more precisely than I can: the structure of the Microsoft deal ensures that Microsoft will profit from nearly any outcome, and as a result “the deal’s structure, and the dream of AGI, are in ideal symbiosis.”[7]

In short: if the falcon is lead, the AI economy crashes.

The MacGuffin Changes in Every Act

Here’s the thing about a MacGuffin. It doesn’t need a stable definition. It just needs to motivate the next scene. AGI has been doing this for seven years, and its definition has changed in every act.

Act One: 2019. Microsoft invests $1 billion in OpenAI and becomes its exclusive cloud partner. As part of the deal, OpenAI inserts what becomes known as the “AGI clause”: if OpenAI’s board declares AGI has been achieved, Microsoft’s access to future models is restricted.[8] The clause is meant to protect OpenAI’s founding mission—that AGI should benefit all of humanity, not just one corporation. AGI feels comfortably distant, so Microsoft concedes the point. A MacGuffin in its purest form: important enough to write into a contract, undefined enough that nobody seems worried about it.

Then ChatGPT happens, and suddenly the falcon matters.

Act Two: 2023. Microsoft has now poured $13 billion into OpenAI. The philosophical definition is apparently not enough. The two companies quietly add a new trigger alongside the original clause: a “Sufficient AGI” threshold, under which OpenAI can revoke Microsoft’s exclusivity entirely when its AI systems generate at least $100 billion in profits.[9]

Let that settle. The original AGI clause still exists—the board can still declare AGI on philosophical grounds. But now, sitting right next to it in the same contract, is a mechanism that defines the most hotly debated concept in artificial intelligence as a profit threshold. AGI, in the contract that actually governs the most important partnership in the industry, is whatever the OpenAI board says it is, as long as it comes with $100 billion in profits.

The MacGuffin has been opened. Inside is a profit target.

Act Three: October 2025. The partnership is restructured. The power to declare AGI—previously held solely by OpenAI’s board—now requires verification by an “independent expert panel.”[10] Microsoft’s IP rights are extended through 2032, including post-AGI models. The philosophical safeguard has acquired a chaperone, and the chaperone’s job is to make sure nobody declares AGI prematurely—or, depending on your read, to make sure nobody declares it at all.

Act Four: February 2026. Amazon commits $50 billion: $15 billion upfront, the remaining $35 billion contingent on what the SEC filing calls “certain conditions.”[11] The specific conditions are redacted. The Information reports the triggers may include OpenAI reaching an AGI milestone or completing an IPO.[12] When OpenAI CEO Sam Altman is asked about it on CNBC, he says, “We’re not doing new deals that stop when AGI gets reached.”[13] Note the phrasing. He doesn’t say AGI isn’t in the contract. He says the deals don’t “stop.”

A few weeks later, Jensen Huang goes on the Lex Fridman podcast and declares, “I think we’ve achieved AGI.”[14] His definition: an AI that can build a billion-dollar business, even briefly—including a viral app that earns money and then shuts down. Nvidia controls roughly 80% of the AI chip market. Draw your own conclusions about why its CEO is declaring victory.

Philosophical safeguard. Profit target. Panel-verified declaration. Redacted trigger. Definitional fait accompli. The same three letters—no agreed-upon meaning—have been a weapon, a shield, a goalpost, and a checkbox, depending entirely on who was holding the pen and what scoring function they were maximizing.

So Wait, What Exactly Is AGI?

To the researchers—the people actually working on the science—AGI is not a MacGuffin. Its content matters enormously. The question of whether current architectures can achieve general intelligence is real, unsettled, and consequential. These people need AGI to mean something specific, because they’re trying to build it or prove it can’t be built. For them, the falcon’s composition is the whole point. AGI defined as $100 billion in profits and a vote of the OpenAI board is not going to satisfy their need to understand the technology.

For the contracts, the fundraising decks, and the SEC filings? AGI is a pure MacGuffin. Its content is irrelevant to its function. The definition changes in every act and the chase never stops. The 2019 clause didn’t need AGI to mean anything; it needed AGI to feel far away. The 2023 amendment didn’t need AGI to mean anything; it needed AGI to mean $100 billion in profits. The 2026 filing doesn’t need AGI to mean anything; it needs AGI to remain redacted. What’s inside the falcon has never mattered to the deal.

The term itself has a revealing history. “Artificial general intelligence” was coined by researcher Mark Gubrud in 1997 as an academic distinction—a way of separating the dream of general machine intelligence from the narrow expert systems that had defined the field up to that point.[15] It lived in academic obscurity for over a decade. Then the AI industry seized it. As AI Now documented, OpenAI and its peers adopted AGI as a term “central to their marketing efforts,” constructing “an imaginary where an AGI of great power looms on the horizon” to frame their products as precursors to something transformative.[16] A term coined to describe a scientific aspiration became a marketing ploy.

OpenAI’s charter says AGI means “highly autonomous systems that outperform humans at most economically valuable work.” Its contract with Microsoft adds a $100 billion profit trigger. Huang says we already have it if you count viral apps. A recent paper in Nature argues LLMs already qualify.[17] A 2025 survey of leading AI researchers found that 76% consider it unlikely that current approaches alone will achieve AGI.[18] The term means everything and nothing, which makes it perfect for a fundraising deck, but useless for science.

The Stuff That Dreams Are Made Of

I’m not saying AGI is impossible, though it would help if we could all agree on exactly what AGI is before making that judgment. I’m not saying the technology isn’t extraordinary—it is, and it has changed my own work in ways I find genuinely exciting.

What I’m asking is that you keep in mind that the people telling you AGI is imminent have an enormous financial interest at stake. That doesn’t make them bad people. It does make their confidence uninformative. Sam Altman and Dario Amodei may sincerely believe AGI is around the corner, but their sincerity is not relevant.

Charlie Munger liked to say: show me the incentive and I’ll show you the outcome. The entire venture capital ecosystem depends on the next round being larger than the last, which depends on the story getting bigger, which depends on AGI remaining perpetually imminent. These people have an enormous financial incentive to frame what they’ve built as the precursor to something even larger—and a corresponding disincentive to say: “We’ve built an incredible tool. It’s not a mind. It may never become a mind. It’s going to change everything anyway, but you will likely lose money investing in the next round.”

Here’s a simple test. Ask the person making the AGI claim what evidence would convince them they’re wrong. If they can’t answer—if every failure is reframed as progress, every limitation as temporary, every moved goalpost as refinement—you are not in a scientific conversation. You are in a sales pitch.

I’m not a skeptic. I’m a pedant. There’s a difference. I am unbelievably excited about what these tools actually do. I just think we should notice when the people telling us what the tools are have a trillion dollars riding on inserting the letter G between the A and the I.

Gutman spent seventeen years chasing the falcon. It was lead. Spade handed it to the cops and walked out of the room. When they asked him what it was, he had the best answer anyone’s ever given about a MacGuffin.

“The stuff that dreams are made of.”

Sounds like AGI to me.

[1] Hitchcock first used the term MacGuffin publicly in a lecture at Columbia University on March 30, 1939 (typescript held at the Museum of Modern Art, Department of Film & Video; earliest citation in the Oxford English Dictionary). The lions-in-the-Scottish-Highlands anecdote appears in its fullest documented form in François Truffaut, Hitchcock/Truffaut (Simon & Schuster, 1967), based on interviews conducted in 1962. Hitchcock repeated the story in interviews with Richard Schickel (The Men Who Made the Movies, 1973) and Dick Cavett (1972), among others. The term is attributed to Hitchcock’s colleague, screenwriter Angus MacPhail.

[2] Dashiell Hammett, The Maltese Falcon (1930). Gutman’s pursuit spans seventeen years. The climactic reveal—the falcon is a lead forgery coated in black enamel—drives the final act. Bogart’s “stuff that dreams are made of” line is in John Huston’s 1941 film adaptation; it’s not in Hammett’s novel.

[3] OpenAI closed $110 billion in funding at a $730 billion pre-money valuation ($840 billion post-money) in February 2026. Amazon invested $50 billion, Nvidia $30 billion, SoftBank $30 billion. The round subsequently expanded to $122 billion at an $852 billion valuation by March 31. CNBC, February 27, 2026; Bloomberg, February 27, 2026; CNBC, March 31, 2026.

[4] OpenAI does not expect to turn profitable until at least 2029. Projected losses from 2023 to 2028 total approximately $44 billion. The Information; TechCrunch.

[5] Nick Patience, AI coverage lead at The Futurum Group, quoted by CNBC, January 6, 2026: the $1 trillion IPO figure relates to a “perfection scenario that assumes AGI is imminent.”

[6] Anthropic closed a $30 billion Series G round at a $380 billion post-money valuation on February 12, 2026. Led by GIC and Coatue, with participation from Microsoft, Nvidia, D. E. Shaw, Founders Fund, and others. CNBC, February 12, 2026; Bloomberg, February 12, 2026.

[7] Brian Merchant, “AI Generated Business: The Rise of AGI and the Rush to Find a Working Revenue Model,” AI Now Institute, April 2025. See also AI Now Institute, “The AGI Mythology: The Argument to End All Arguments,” Chapter 1.1 of Artificial Power, June 2025.

[8] The “AGI clause” was included in the 2019 Microsoft–OpenAI partnership agreement, announced July 22, 2019. OpenAI’s charter defines AGI as “highly autonomous systems that outperform humans at most economically valuable work.” Under the original clause, an AGI declaration by OpenAI’s board would restrict Microsoft’s access to post-AGI models. OpenAI press release, July 22, 2019; TechCrunch, July 22, 2019; Stanford CodeX analysis, March 2025.

[9] In 2023, Microsoft and OpenAI added a “Sufficient AGI” trigger: if OpenAI’s AI systems generate at least $100 billion in profits, OpenAI can revoke Microsoft’s exclusivity entirely. This is separate from the original AGI clause, which remains tied to a board declaration. First reported by The Information, December 2024; confirmed by TechCrunch, December 26, 2024.

[10] Under the October 2025 restructuring, AGI is still declared by OpenAI, but any declaration must now be verified by an independent expert panel. Microsoft’s IP rights were extended through 2032, including post-AGI models. Microsoft Official Blog, October 28, 2025; OpenAI announcement, October 28, 2025.

[11] Amazon’s $50 billion investment in OpenAI is structured as $15 billion upfront and $35 billion “in the coming months when certain conditions are met.” OpenAI press release, February 27, 2026; SEC filing reviewed by GeekWire, February 2026. The specific conditions are redacted.

[12] The Information reported on February 25, 2026, citing people familiar with the matter, that the $35 billion tranche may be contingent on OpenAI either achieving an AGI milestone or completing an IPO. Reuters confirmed the reporting.

[13] Sam Altman, interview on CNBC’s “Squawk Box,” February 27, 2026: “We’re not doing new deals that stop when AGI gets reached.”

[14] Jensen Huang, Lex Fridman podcast, released March 22, 2026: “I think it’s now. I think we’ve achieved AGI.” In late 2023, Huang had predicted AGI was five years away. Yahoo Finance, March 24, 2026; Fortune, March 30, 2026.

[15] Mark Gubrud coined “artificial general intelligence” in a 1997 paper at the Fifth Foresight Conference on Molecular Nanotechnology. Shane Legg independently reinvented the term in the early 2000s while collaborating with Ben Goertzel. See Scientific American, November 20, 2025; AI Now Institute, “AI Generated Business,” April 2025.

[16] AI Now Institute, “The AGI Mythology: The Argument to End All Arguments,” Chapter 1.1 of Artificial Power, June 2025.

[17] Eddy Keming Chen et al., “Does AI already have human-level intelligence? The evidence is clear,” Nature 650 (2026): 36–40. For a rebuttal, see Gary Marcus, “Rumors of AGI’s arrival have been greatly exaggerated” (February 2026).

[18] Survey published in Nature, March 4, 2025: 76% of leading AI researchers considered it “unlikely” or “very unlikely” that current LLM approaches alone would achieve AGI.

Jeff Reid is a retired scientist, old-school nerd, co-founder of the Regeneron Genetics Center, and the writer of Tears in Rain (tearsinrain.ai), a weekly Substack about AI, human-AI relationships, and the gap between what these systems are and what we need.