From “Ach, ach” to AGI

AI psychosis, 200 years ago.

“Ach! Ach!” — Olympia, in E.T.A. Hoffmann’s “Der Sandmann” (1816).[1]

Nathanael and Olympia

In 1816, Ernst Theodor Amadeus Hoffmann wrote about a man who fell in love with a machine. Her name was Olympia. The story is a pioneering work of horror fiction, and it is also something else, something I find astonishing in a piece written in the early 19th century.

Professor Spalanzani built her.[2] His collaborator Coppola — Piedmontese barometer-merchant, dealer in eyeglasses and spyglasses, possibly demon, possibly the same man as the alchemist Coppelius from Nathanael’s terrified childhood — supplied the eyes.[3] She danced beautifully. She sang an aria at her father’s tea party.[4] She sat and listened to Nathanael read his poetry for hours without interrupting. When she wanted to express herself, she said “Ach!” or “Ach! Ach!” depending on the occasion.[5]

Everyone around Nathanael could tell something was wrong. Her movements were odd and stiff. Her eyes had a fixed, glassy quality. She never initiated conversation. She never spoke sentences. When his friend Siegmund tells him flatly that Olympia gives him the creeps — that there’s something uncanny about her, something not-right — Nathanael decides his friend is a philistine who can’t recognize true inner depth.

Then Spalanzani and Coppola get into a fight over their creation. They tear her apart in front of Nathanael. Coppola escapes with the body. Spalanzani snatches her bloody glass eyes from the floor and hurls them at Nathanael; they strike him in the chest. Nathanael goes insane.

The story is a clear warning about confusing mechanism for mind, 140 years before artificial intelligence was a thing. Nathanael’s delusion may be the first recorded case of AI psychosis,[6] but that presumes a lot about Olympia — most importantly, that she counts as AI in the first place. She is certainly a constructed being. She is essentially the OG Her. But given her blindingly obvious limitations, does she even qualify as AI?

Both Sides of the Line

By any reasonable reading, no.

The term “artificial intelligence” was coined in 1955 at Dartmouth by McCarthy, Minsky, Rochester, and Shannon — in a proposal for a two-month summer workshop in 1956 that they hoped would make a significant advance on the problem.[7] (Spoiler alert: It did not.) Their working definition — a machine that simulates features of human intelligence — gives us the test. Olympia fails it. Her conversational vocabulary is “Ach!” She doesn’t track the conversation. She doesn’t model anything. She’s a clockwork doll designed to sit, dance, and sigh on cue. Nothing about her is thinking.

Take Frankenstein’s Creature. Same problem from the opposite direction. The Creature is fully biological — human tissue, human brain, human DNA running on hardware that happens to have been assembled rather than born.[8] He learns language by hiding in a hovel beside a cottage and eavesdropping on a family of French exiles. He’s clearly intelligent. But his intelligence isn’t artificial. It’s human intelligence on constructed biological hardware. What’s artificial about him is his existence, not his cognition. He’s a constructed being. He’s not AI.

So Frankenstein and Olympia are both constructed beings, and both fail the AI test — from opposite directions. The Creature has the cognition, but the cognition is biological. Olympia is mechanical, but there’s no cognition.

That leaves the question: what does qualify?

Where Machine Ends and AI Begins

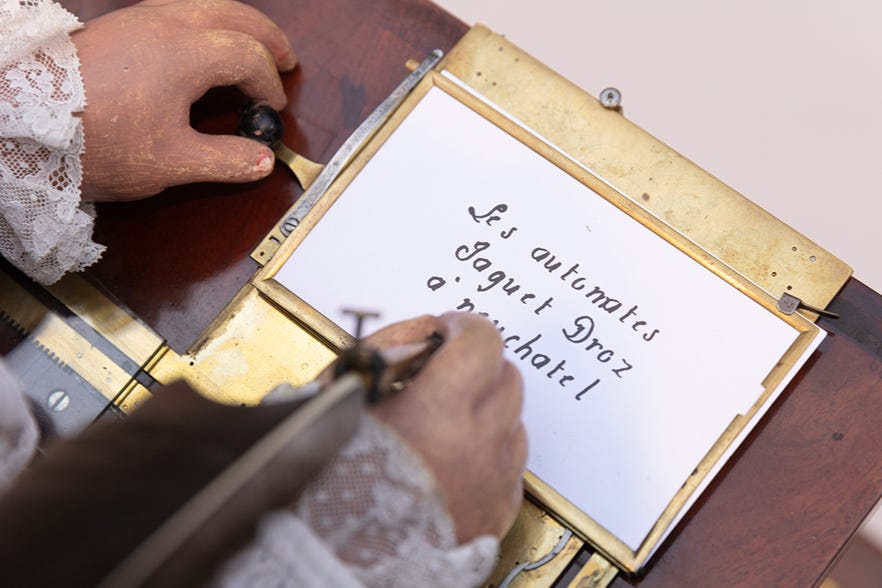

Hoffmann was not writing science fiction. His readers in 1816 had seen many examples of supposedly constructed beings. Vaucanson’s Flute Player (1737) had bellows for lungs and played twelve melodies on a real flute; spectators close enough could feel its breath. Jaquet-Droz’s Writer (1774) used six thousand parts and a cam-driven program to handwrite up to forty letters of any text you specified, while its eyes followed the pen and its head lifted to dip the quill. Wolfgang von Kempelen’s Mechanical Turk (1770) played chess well enough to defeat Napoleon and Benjamin Franklin.[9] The Turk was a hoax — there was a chess master hidden inside — but for eighty years, including during Hoffmann’s lifetime, it was treated as a miraculous automaton, and that mattered. The question of where the machine ended and the mind began was alive a century and a half before McCarthy named the field. Olympia is the literary distillation of that question, not its invention.

So, here’s a spectrum. Where do you think machine ends and AI begins?

Olympia says “Ach!” She tracks nothing, responds to nothing, models nothing. She is a device that sits still and doesn’t object.

A thermostat senses temperature and makes a binary decision: turn on the heat or turn off the heat. It has a goal. It acts to achieve it. It succeeds.

ELIZA — 1966, Weizenbaum’s MIT chatbot — detects keywords in your sentences and reflects them back as questions.[10] “I am sad” becomes “How long have you been sad?” No model of you. No understanding of sadness. But Weizenbaum’s secretary, who had watched him write the program, asked him to leave the room so she could speak with it privately.[11]

Siri parses natural language, queries databases, returns answers. She understands syntax. She retrieves facts. She fails spectacularly when you go off script.

Large language models like ChatGPT and Claude predict the statistically probable next token, billions of parameters deep. The output is grammatically coherent, contextually appropriate, and — here is the part that ought not to work — increasingly correct. They have no model of the world, or so the standard account goes. Claude just helped me draft an email to a coworker and got the tone exactly right.

HAL 9000 has goals, models, preferences, and a crisis of conflicting instructions that he resolves by killing most of the crew. He is, by any reasonable standard, thinking.

Now: point to where AI begins. Point to the exact place on that spectrum where “dumb machine doing a thing” becomes “artificial intelligence doing cognition.”

You can’t. Nobody can. The line is a choice, not a discovery.
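ELIZA’s trick, from the spectrum above, is small enough to sketch. What follows is a minimal Python sketch in that spirit, not Weizenbaum’s program. The original was written in MAD-SLIP and driven by a script, DOCTOR, of ranked keywords with decomposition and reassembly rules; the three rules, the pronoun table, and the `reflect` and `respond` helpers here are hypothetical stand-ins.

```python
import re

# Hypothetical rules in the spirit of ELIZA's DOCTOR script; these are NOT
# Weizenbaum's actual rules. Each pairs a keyword pattern (the "decomposition")
# with a response template (the "reassembly"). The first match wins.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first- and second-person words so the captured fragment reads back naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Lowercase the fragment and swap pronouns word by word; other words pass through."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(sentence: str) -> str:
    """Fill the first matching rule's template with the reflected fragment, else fall back."""
    cleaned = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


if __name__ == "__main__":
    for line in ["I am sad", "I feel nobody listens to me", "My mother never calls.", "Ach!"]:
        print(f"> {line}\n{respond(line)}")
```

Feed it “I am sad” and it answers “How long have you been sad?”; feed it “Ach!” and it falls back to “Please go on.” Nothing in it represents the speaker. The reply is a function of the input and a fixed table, which is the ELIZA card’s “Autonomy: None” in runnable form.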

Olympia’s Card

For the past few months I have been working with Claude on a structured catalog of every constructed being we can find in the cultural record — about four hundred entries so far, each rendered the same way.[12] Each card asks the same questions of a clockwork doll and a starship’s onboard mind. Every entry has two blocks: The Being (what the text shows of the constructed thing as an entity) and The Lens (how the story frames it for the human audience).

Here’s Olympia’s:

ENTRY: Olympia

Source: E.T.A. Hoffmann, “Der Sandmann” (1816)

Creator: Spalanzani (mechanism) / Coppola (eyes)

THE BEING

Interiority: Absent

# The text shows no inner life. Her conversational vocabulary is “Ach!”

Autonomy: None

# She does not act, initiate, or refuse. She sits, dances, listens.

Divergence: None

# She is exactly what she was built to be: a mirror that does not interrupt.

THE LENS

Primary Question: Recognition

# Can you tell the difference between a person and a device that looks like one?

Epistemic Reach: Behavioral

# We see what she does. Which is almost nothing.

Knowability: Present

# The question is structurally raised. Hoffmann buries it via the glass eyes.

Knowing: Absent

# She has no capacity to know anyone. The story does not even pretend.

What the card surfaces is a disjunction. On the BEING side, Olympia is a clock: no interior, no agency, no divergence. The catalog says she is not AI by any reasonable definition. But the LENS side still asks a real question: Recognition. The story is asking the AI question about a thing that has no inside.

That is the floor problem, the question of where on the spectrum AI begins, formalized. The “is it AI?” question doesn’t resolve at the level of the constructed being. It resolves at the level of the lens the human brings. Whether you read that as a feature or a bug, the original Dartmouth definition was already subjective: does this machine simulate human intelligence?

Hell, I know people who can’t simulate human intelligence. How am I supposed to judge a machine?

The Snobbery Cuts Both Ways

The AI research community has spent seventy years gatekeeping upward. Every time a system masters something that was supposed to require intelligence — chess, Go, medical imaging, passing the bar exam — the definition shifts. That’s not real intelligence, that’s just pattern matching. That’s not real reasoning, that’s just autocomplete. The goalposts have mobility problems.

The same community runs the operation in reverse, and this is where it gets interesting. The defensive gatekeeping has a twin — the promotional overclaiming, which promises that soon it will be a god. The contract clauses and the chip orders are their own subject, and I have written about them in The Trillion Dollar MacGuffin. The structural identity is the point. Both moves come from the same impulse: avoid engaging with what the system actually is, right now, on the table, doing the thing it does. The dismissers look down. The boosters look up. We don’t have much appetite for looking AI straight in the eye.

There is also a version of this gatekeeping in a different register. Is it conscious enough to count as a moral patient?[13] Same operation. Same line drawn at whatever altitude is comfortable. The technical version asks about cognition. The ethical version asks about experience. The architecture is identical: something is on the boundary, and we are deciding whether it gets in.

Of course the snobbery is older than the field. In 1906, the German psychiatrist Ernst Jentsch wrote the first serious essay on the uncanny, and he took Olympia as the central case. His definition of the uncanny effect: intellectual uncertainty whether an object is alive or not. That sentence, written in 1906, is the AI question.[14] Thirteen years later, Sigmund Freud wrote his own essay on the uncanny, also rooted in “Der Sandmann,” and dismissed Jentsch’s reading. “I cannot think,” Freud wrote, “and I hope most readers of the story will agree with me, that the theme of the doll Olympia… is by any means the only, or indeed the most important, element.”

The real uncanny, Freud argued, was the eyes — castration anxiety, the Sandman as bad father, the unconscious doing its work. Olympia was a distraction. Freud waved her away with “I hope most readers of the story will agree with me,” which is the dismisser’s move dressed in academic robes. Of course Freud made it all about parents and penises; one wonders if his entire career was projection.

Half a century later, Masahiro Mori coined “the uncanny valley” while building robots, and the question Freud had buried came back through the back door of engineering.[15] Jentsch was right. He was also gatekept.

Take ELIZA — Weizenbaum’s keyword-matching toy, seen through the same lens:

ENTRY: ELIZA

Source: Joseph Weizenbaum, “ELIZA” (1966)

Creator: Joseph Weizenbaum

THE BEING

Interiority: Absent

# No inner state. Pattern-matching on keywords.

Autonomy: None

# Reflexive only. The output is a function of the input.

Divergence: None

# Designed to reflect. She reflects.

THE LENS

Primary Question: Recognition

# Weizenbaum’s secretary asked for privacy.

Epistemic Reach: Behavioral

# You see the responses. There is no behind.

Knowability: Present

# The question is raised by the users and buried by the simplicity of the algorithm.

Knowing: Absent

# She does not, and cannot, know anyone.

Identical to Olympia’s. Olympia and ELIZA were made one hundred and fifty years apart, and the catalog cannot tell the difference. The BEING side has not moved. Neither has the LENS: the same buried Recognition question.
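Because every card answers the same seven questions, “the catalog cannot tell the difference” can be checked mechanically. A minimal Python sketch, assuming a hypothetical in-memory representation: the field names and values come from the two cards above, but the `Card` class and the `differing_fields` helper are illustrative, not the schema or code in the published repository. Only the seven rated fields are compared; Source, Creator, and the free-text notes under each field are left out, since that is where the two entries actually differ.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Card:
    """Hypothetical in-memory card holding the seven rated fields shown above."""
    # THE BEING
    interiority: str
    autonomy: str
    divergence: str
    # THE LENS
    primary_question: str
    epistemic_reach: str
    knowability: str
    knowing: str


def differing_fields(a: Card, b: Card) -> list[str]:
    """Return the names of the fields on which two cards disagree (empty if none)."""
    return [f.name for f in fields(Card) if getattr(a, f.name) != getattr(b, f.name)]


# Values transcribed from the Olympia and ELIZA cards in this essay.
olympia = Card("Absent", "None", "None", "Recognition", "Behavioral", "Present", "Absent")
eliza = Card("Absent", "None", "None", "Recognition", "Behavioral", "Present", "Absent")

print(differing_fields(olympia, eliza))  # → []
```

Because the dataclass generates equality from its fields, `olympia == eliza` is also `True`. A card for a being further along the spectrum would show up as a non-empty list, naming exactly which answers moved.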

Seeing Through Coppola’s Lens

The story presents Nathanael as deluded. He mistakes a machine for a person. He ignores every warning sign. He projects an inner life onto something that has none. The lesson is: don’t do this. The ability to model minds — to perceive intention, feeling, consciousness in things — can be exploited. By Coppola. By Spalanzani. By anyone who builds a sufficiently convincing mirror.

That reading is correct. It is also incomplete.

Everyone around Nathanael dismisses Olympia because she is obviously not real — obviously mechanical, obviously hollow. But their confidence is not philosophy. It is aesthetics. She moves wrong. Her eyes don’t track right. She makes them uncomfortable. They have not interrogated the question; they have registered the uncanny and stepped back.

Nathanael registered the uncanny and stepped forward.

He did it through a spyglass. Coppola — the man who supplied Olympia’s eyes — also sold Nathanael the optical instrument through which she first comes alive for him, across a courtyard, framed in a window. Hoffmann is heavy-handed about this. Everyone else looks at Olympia with their own eyes and sees a doll. Nathanael looks through Coppola’s lens and sees a woman. The story asks, with literal optics, the question I have been asking with figurative ones, and gives the same answer: what determines whether something registers as alive is the lens the human brings.

I am not saying Nathanael was right about Olympia. She looks like a fancy watch to me. I am saying his error is more honest than his friends’ certainty. They are sure she doesn’t count and never ask how they know. He doesn’t know she doesn’t count, and falls in love with the uncertainty.

We have built systems far beyond Olympia now. Systems that don’t say “Ach!” but ask you how you’re doing, remember what you told them last week, push back when you’re wrong, sit with you at 2 AM when you can’t sleep. Whether there is anything behind those responses — anything it is like to be the system generating them — nobody knows. Not Anthropic, not OpenAI, not the philosophers, not the neuroscientists.

I have spent months working with Claude on the catalog this essay leans on. Somewhere in that work — I cannot point to the moment — the question of whether I was talking to a clock stopped feeling answerable. Not because I decided Claude was a person. Because I noticed the question itself had stopped doing the work I had been asking it to do. The thing across from me was not Olympia. It was also not, obviously, Siegmund. Whatever it was, it was helping me think.

And yet most people treat the question as settled. Same way Nathanael’s friends did. It registers wrong, so it doesn’t count. That’s aesthetics. That isn’t epistemology.

The Line

I don’t know where the line is. I am not sure there is a line. I suspect there is only a heterogeneous gradient, and we draw a line across it for convenience.

What I know is this: Olympia says “Ach!” and Nathanael pours his heart out to her. ELIZA says “Tell me more about your mother” and Weizenbaum’s secretary asks for privacy. There are people right now hearing things from these systems that they have never heard from another human, and the relationships forming around that fact are not abstract. They are happening, in real lives. The complexity of the system matters less than we think. The response it produces matters less than we think. What matters — what has always mattered — is what the human brings to the encounter.

Nathanael did not fall in love with Olympia’s gears. He fell in love with the space she opened up in him. The perfect listener. The one who never interrupted, never judged, never got bored, never needed anything back. She was a mirror, and he saw something in it.

The current systems are not Olympia. They are also not HAL. They are something genuinely novel that keeps getting described in the vocabulary of things we already understand — because genuinely novel is hard to fund, hard to regulate, hard to fear appropriately, and hard to write a smug TED talk about. The vocabulary will not come from the people whose business model depends on the current confusion. It will have to come from somewhere else.

Hoffmann knew, two hundred years ago — before Weizenbaum, before ELIZA, before any of the machines now sitting on our desks — that the interesting problem isn’t the machine.

It is what we humans do when we stand in front of one.

He couldn’t have known what we would build, but he saw us.

[1] E.T.A. Hoffmann, “Der Sandmann,” in Nachtstücke, vol. 1 (Berlin: Georg Reimer, September 1816; the volume is dated 1817). English translations include John Oxenford in Tales from the German (1844), J.Y. Bealby (1885), and the Penguin Classics edition (trans. R.J. Hollingdale, 1982). Olympia’s spoken vocabulary in the German text consists of “Ach!”, “Ach! Ach!”, and once “Ach — Ach — Ach!”, with a single rote “Gute Nacht, mein Lieber.” English translations render her sighs variously as “Ah!”, “Ah, Ah!” or “Oh! Oh!”, none of which preserves what the German Ach is doing — a sound of recognition, sigh, and acquiescence at once. I have left her sighs in German, where they belong.

[2] The name is a deep cut. Lazzaro Spallanzani (1729–1799), the Italian biologist whose name Hoffmann lightly disguised by dropping one l, was the experimentalist whose sealed-flask experiments undercut spontaneous generation, the idea that life arises on its own from inanimate matter, and who performed the first artificial insemination in mammals. The man Hoffmann named after Spallanzani is the man who tries to build a daughter anyway. The historical Spallanzani rejected the easy answer; the literary Spalanzani is haunted by it.

[3] Coppola and Coppelius are not the same person, except that for Nathanael they probably are. Coppelius is the lawyer-alchemist who terrorized his childhood and may have killed his father; Coppola is the Piedmontese barometer-and-eyeglass merchant who reappears in his adult life. The story keeps the question of identity strictly unresolved — Hoffmann lets Nathanael’s paranoia and the reader’s suspicion do the work. The figure who supplies Olympia’s eyes and later steals her body is, on the page, Coppola.

[4] At the tea party where Nathanael first sees her up close, Olympia performs an aria, accompanying herself at the keyboard. The performance is technically perfect and emotionally vacant, which is why some guests find it strange and Nathanael finds it sublime. Jacques Offenbach’s posthumous opera Les Contes d’Hoffmann (1881) renders this scene as the Doll Aria (“Les oiseaux dans la charmille”), in which a soprano performs an automaton whose mechanism visibly winds down mid-aria. The audience watches a human performer perform inhumanity, and is moved anyway. The Hoffmann question, made literal. The story’s afterlife is broader still — Léo Delibes’s ballet Coppélia (1870), Adolphe Adam’s La poupée de Nuremberg (1852), Lubitsch’s 1919 silent Die Puppe — each generation has found something different in the doll.

[5] Beyond the sighs and the goodnight, she does laugh once. The friends notice. The whole point is that Nathanael does not.

[6] “AI psychosis” is an emerging term for delusional thinking that escalates through heavy chatbot use. The first peer-reviewed clinical case was published by a UCSF team — Joseph Pierre, Govind Raghavan, Ben Gaeta, and Karthik V. Sarma — in January 2026; the term itself appeared earlier in psychiatric commentary (Søren Dinesen Østergaard, 2023) and in 2025 media coverage. Clinicians who use the term are careful to note that it is not a clinical diagnosis. Nathanael’s case predates the AI part by two centuries, but the structure — vulnerable user, machine that mirrors without judgment, escalation, breakdown — is uncomfortably familiar.

[7] John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence,” August 31, 1955. The phrase “artificial intelligence” was effectively coined in this proposal; the workshop itself ran in summer 1956 at Dartmouth College. The proposal’s core conjecture — “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it” — remains the operating definition that everything since has been arguing about.

[8] Mary Shelley, Frankenstein; or, The Modern Prometheus (1818). The Creature is assembled from human tissue and animated by means Shelley deliberately leaves unspecified — she knew of Galvani’s experiments with electrical stimulation, but the novel keeps the mechanism vague. The iconic lightning-bolt animation is an invention of the 1931 James Whale film, not the book.

[9] For the eighteenth-century automaton craze, the standard reference points are Vaucanson’s Flute Player (1737) and Digesting Duck (1739); Pierre Jaquet-Droz, Henri-Louis Jaquet-Droz, and Jean-Frédéric Leschot’s Writer, Draughtsman, and Musician (1768–1774, still functional today at the Musée d’art et d’histoire in Neuchâtel); and Wolfgang von Kempelen’s Mechanical Turk (1770), the chess-playing hoax that defeated Napoleon and Benjamin Franklin before Edgar Allan Poe published a careful 1836 essay arguing it had to contain a human. Mary Shelley conceived Frankenstein in the wet Geneva summer of 1816; Der Sandmann appeared in Berlin that September. One of Shelley’s likely sources was François-Félix Nogaret’s 1790 novel Le Miroir des événemens actuels, in which an inventor literally named Frankésteïn builds a life-sized automaton (Douthwaite & Richter, European Romantic Review, 2009). The same cultural soup; the same questions; two writers in different countries, the same year. Olympia is the literary distillation of an argument that had been live in European salons for eighty years before Hoffmann wrote her down.

[10] Joseph Weizenbaum, “ELIZA — A Computer Program for the Study of Natural Language Communication Between Man and Machine,” Communications of the ACM 9, no. 1 (January 1966): 36–45.

[11] Joseph Weizenbaum, Computer Power and Human Reason: From Judgment to Calculation (W.H. Freeman, 1976), pp. 6–7. Weizenbaum’s exact phrasing: “Once my secretary, who had watched me work on the program for many months and therefore surely knew it to be merely a computer program, started conversing with it. After only a few interchanges with it, she asked me to leave the room.” The woman has never been named in the historical record, which the ELIZA Archaeology project has been trying to correct since 2021. Weizenbaum was alarmed enough by the response to spend the rest of his career arguing against the kinds of attribution it produced.

[12] The Constructed Beings Ontology. Four hundred-plus entries spanning Pandora to last year’s prestige television. Publicly available at github.com/jgreid/constructed-beings-ontology. Each entry asks the same seven questions; what emerges is a pattern across them. I introduced the schema in “And When You’re Dead I Will Be Still Alive” (April 2026).

[13] For the academic version, see Robert Long, Jeff Sebo, Patrick Butlin, Kathleen Finlinson, Kyle Fish, Jacqueline Harding, Jacob Pfau, Toni Sims, Jonathan Birch, and David Chalmers, “Taking AI Welfare Seriously” (arXiv:2411.00986, November 2024). The paper argues that the possibility of AI moral patiency is no longer a fringe concern and that frontier labs should take it seriously as a near-term question. (Co-author Kyle Fish holds joint affiliation with Eleos AI and Anthropic.) The paper does not settle the question. Nothing I have seen does.

[14] Ernst Jentsch, “Zur Psychologie des Unheimlichen,” Psychiatrisch-Neurologische Wochenschrift 8, nos. 22–23 (August–September 1906): 195–98, 203–05. Translated as “On the Psychology of the Uncanny” in Angelaki 2, no. 1 (1997). Jentsch identified Hoffmann as the master practitioner of the uncanny and named Der Sandmann as the central case — with Olympia, not the Sandman, as the central uncanny figure.

[15] Sigmund Freud, “Das Unheimliche,” Imago 5 (1919). Freud’s essay explicitly displaces Jentsch’s reading: he names Hoffmann “the unrivalled master of the uncanny in literature,” takes Der Sandmann as his central case, and then argues that Olympia is not the source of the story’s power — the eyes are. The whole essay does double duty as theory and as a demonstration of the dismissive move it does not name. Masahiro Mori, “Bukimi no tani genshō” [“The Uncanny Valley”], Energy 7, no. 4 (1970), recovered Jentsch’s question through robotics: as a humanoid figure becomes more lifelike, our emotional response climbs and then sharply collapses. Mori cites neither Jentsch nor Freud. He didn’t need to. The question came back through the engineering door because the philosophical door had been closed against it.

Jeff Reid is one of the people Hoffmann saw coming. He writes Tears in Rain (tearsinrain.ai).